Via @rodhilton@mastodon.social

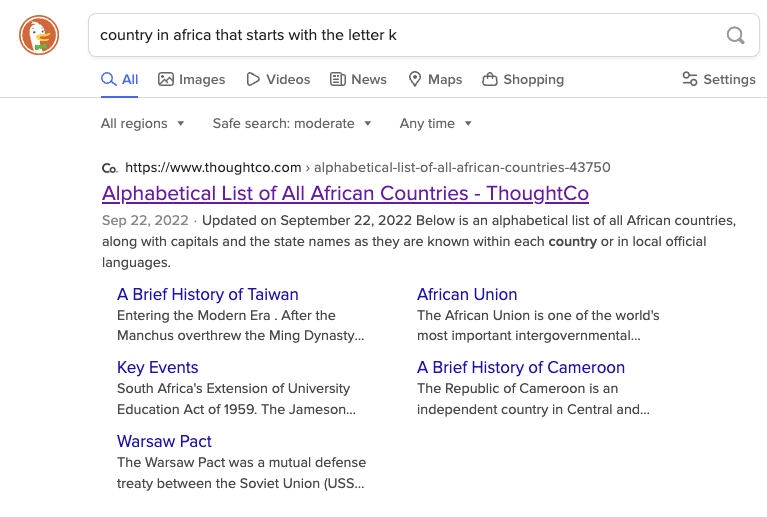

Right now if you search for “country in Africa that starts with the letter K”:

- DuckDuckGo will link to an alphabetical list of countries in Africa which includes Kenya.
- Google's first hit is a ChatGPT transcript in which ChatGPT claims there are none, and Google's summary box says the same.

This is because ChatGPT at some point ingested this popular joke:

“There are no countries in Africa that start with K.” “What about Kenya?” “Kenya suck deez nuts?”

Google Search is over.

Oh, this is great… And because the ChatGPT transcript is highly ranked on Google, it’s almost certainly going to be used for training ChatGPT. A feedback loop of shitty information. Praise ChatGPT!

The second-highest hit gives a clue as to why:

Relevant to Lemmy's existence in the first place, it points to Reddit as a pretty pivotal source of training data, which Reddit is trying to cash in on while also killing third-party apps out of apathy.

ChatGPT creating incorrect feedback loops like this is one of my main concerns about AI being used so widely. That, and original thought disappearing because all new content on the web is generated by AI rather than by humans.

I doubted this, so I tried it. I haven't used Google in ages, so I first had to search "google" in DDG, then go to the main page. When I started typing, it autocompleted the full text of the search, so I thought it was even less likely to behave the way the OP described: even if it had once done that, so many people had evidently run that exact search that the results would surely be corrected by now.

But no, there it was, right at the top: "While there are 54 recognized countries in Africa, none of them begin with the letter "K". The closest is Kenya, which starts with a "K" sound, but is actually spelled with a "K" sound."

And with that, I'll contentedly go back to not using Google.

When will people learn that search results change all the time and differ from person to person?

I get the same result as the main post. Either way the point still stands. I had someone correct me with a misconception about something because he googled it and that's what it said in the answer box. It's getting increasingly difficult to rely on search results, especially when Google synthesizes them into questions and answers with little context.

Yes. I have to keep reminding my parents that those little Google answer boxes aren’t real search results and can’t be trusted. They sometimes say the exact opposite of the page they’re citing!

> Either way the point still stands.

What point?

> I had someone correct me with a misconception about something because he googled it and that's what it said in the answer box

Oh, honey. You must be new here. Googling something, taking the first answer that fits your needs, doing zero follow up, and posting it confidently is nothing new. That has been happening for the last few decades.

> It's getting increasingly difficult to rely on search results, especially when Google synthesizes them into questions and answers with little context

In what way? As always, I have had to do a little critical thinking to get anything out of my Google searches. But now, sometimes the answer is right there. In what world is that a bad thing?

But you might just say "hurry durr it give me answer, therefore correct". Again, yes, that has always been the case. People will use any tool available to them to support their point. If a new tool has less than a 100% success rate, I don't see that as a problem.

The point is that Google is no longer just listing search results. For years now it has been giving the "correct" answer alongside the results. This started off with things it could recognise and easily solve, like calculations ("what is 432 times 548"), but has now moved into general queries powered by LLMs that have no knowledge of fact.

Okay? As I said, Google has been giving incorrect results for decades. Now, just like before, it sometimes gives incorrect answers. But it has gotten a LOT better at giving correct ones.

It is not Artificial Intelligence. It is Average Intelligence. And they want it everywhere.

It’s not intelligence at all. It does not understand what you ask it or what it tells you. It can string words together in a plausible sounding order. It cannot think.

Same with Brave Search. Da fuq is going on anymore?

Compare with Kagi (this is a shared search for anyone who doesn’t have an account):